Experiments

Run experiments to test how different versions of your website perform.

Setting up experiments

To create an experiment, go to the Experiments tab in your project and click the Create experiment button.

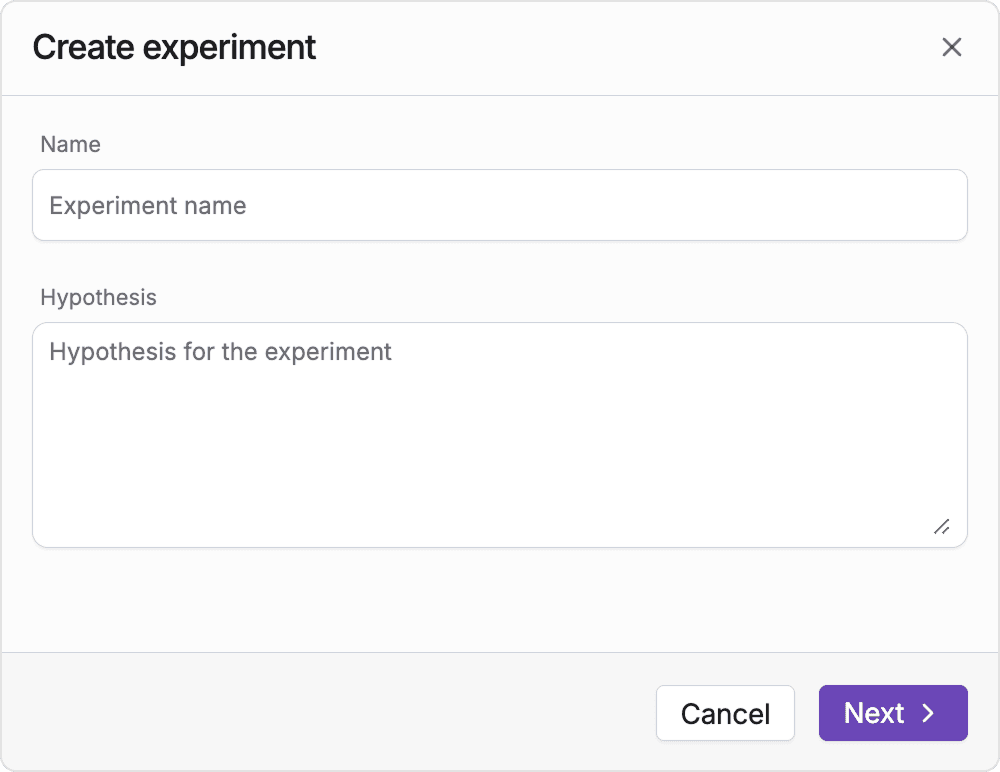

You will be asked to provide a name and a hypothesis. Fill in those fields and click the Next button.

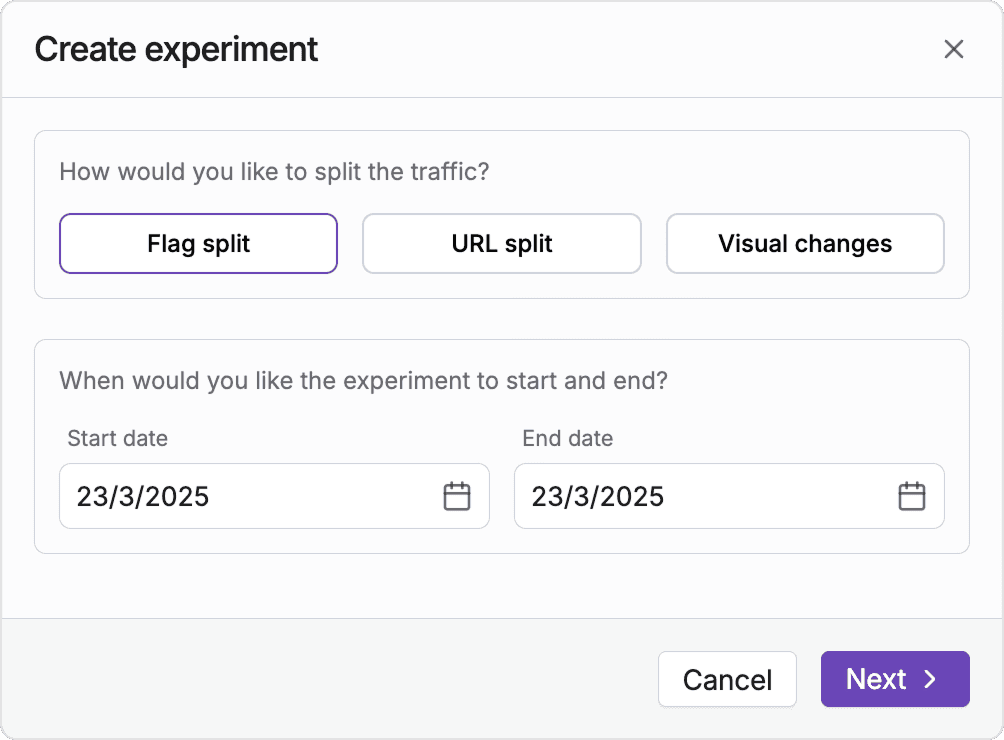

In the next step, select a start and end date for the experiment. Data from those days will be used to calculate your experiment outcomes.

You also need to select how you would like to split your traffic. FeaturesFlow offers three options:

- Flag split - set up your variants in code and split traffic using a feature flag

- Visual editor - introduce changes with AI and test them against the current version of your website

- URL split - set up two different pages and compare their performance

Flag split

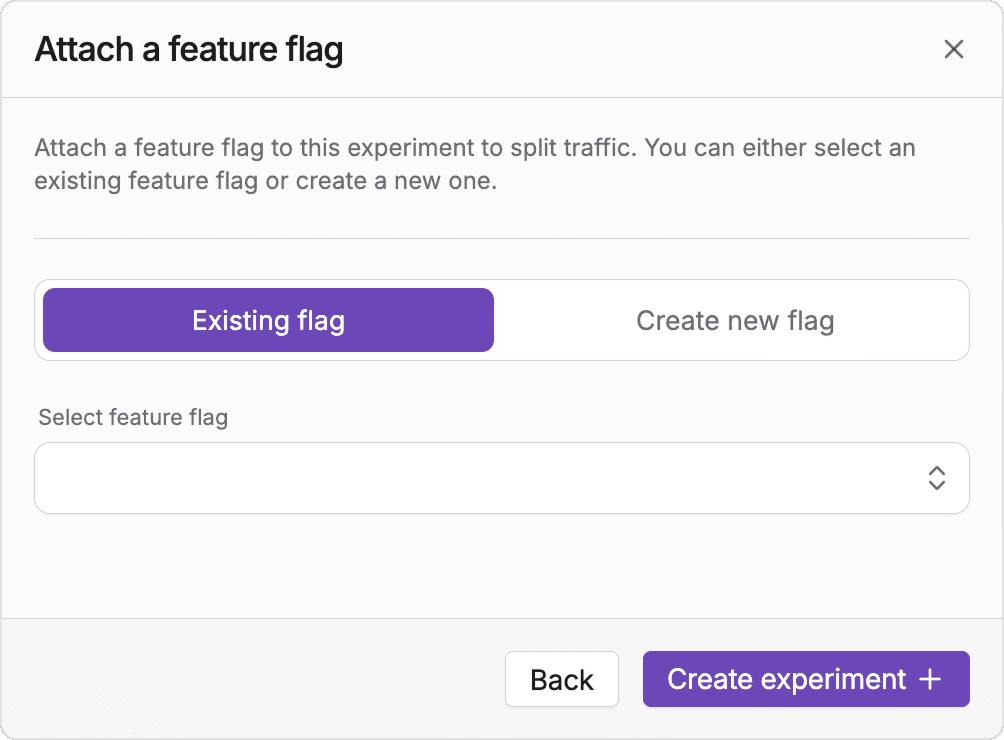

You will then be asked to attach a feature flag to your experiment. You can either use an existing flag to split your traffic or create a new one as part of experiment creation.

Once you have created the experiment, go ahead and use the flag in your code to split traffic.
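As a rough illustration of what using the flag in your code can look like, here is a minimal TypeScript sketch. The `FlagClient` interface, the `HashSplitClient` stand-in, the `new-checkout-button` flag key, and the button labels are all hypothetical and only show the branching pattern. They are not the FeaturesFlow SDK, so check the SDK reference for the actual calls.

```typescript
// Hypothetical flag client interface; the real FeaturesFlow SDK may differ.
interface FlagClient {
  isEnabled(flagKey: string, userId: string): boolean;
}

// Stand-in implementation for illustration only. It buckets users
// deterministically, so the same user always gets the same answer.
class HashSplitClient implements FlagClient {
  private readonly rolloutPercent: number;

  constructor(rolloutPercent: number) {
    this.rolloutPercent = rolloutPercent;
  }

  isEnabled(flagKey: string, userId: string): boolean {
    const input = flagKey + ":" + userId;
    let hash = 0;
    for (let i = 0; i < input.length; i++) {
      // Keep the running hash within unsigned 32-bit range.
      hash = (hash * 31 + input.charCodeAt(i)) >>> 0;
    }
    return hash % 100 < this.rolloutPercent;
  }
}

type Variant = "control" | "treatment";

// Map the flag state to an experiment variant.
function chooseVariant(client: FlagClient, flagKey: string, userId: string): Variant {
  return client.isEnabled(flagKey, userId) ? "treatment" : "control";
}

// Branch on the variant wherever the two versions of the page differ.
function checkoutButtonLabel(variant: Variant): string {
  return variant === "treatment" ? "Buy now" : "Add to cart";
}

const flagClient = new HashSplitClient(50);
const variant = chooseVariant(flagClient, "new-checkout-button", "user-42");
console.log(checkoutButtonLabel(variant));
```

Keeping the flag check in one place (`chooseVariant` here) makes it easier to remove the losing variant once the experiment ends.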

Make sure to also trigger some events to measure your experiment's impact.
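The sketch below shows one way to trigger an event manually when a user completes the action you want to measure. The `ExperimentEvent` shape, the `InMemoryTracker` class, and the `checkout_completed` event name are assumptions made for illustration. A real setup would send events through the FeaturesFlow SDK or your analytics integration instead of keeping them in memory.

```typescript
// Hypothetical event shape; the field names are assumptions.
interface ExperimentEvent {
  name: string;
  userId: string;
  variant: string;
  timestamp: number;
}

// Stand-in tracker that collects events in memory for illustration.
class InMemoryTracker {
  readonly events: ExperimentEvent[] = [];

  // Record one event together with the variant the user was shown.
  track(name: string, userId: string, variant: string): void {
    this.events.push({ name, userId, variant, timestamp: Date.now() });
  }

  // Count how many times an event fired in each variant.
  countByVariant(name: string): Record<string, number> {
    const counts: Record<string, number> = {};
    for (const event of this.events) {
      if (event.name !== name) continue;
      counts[event.variant] = (counts[event.variant] ?? 0) + 1;
    }
    return counts;
  }
}

const tracker = new InMemoryTracker();

// Call track() at the point where the measured action happens,
// for example after a successful checkout.
tracker.track("checkout_completed", "user-1", "treatment");
tracker.track("checkout_completed", "user-2", "control");
tracker.track("checkout_completed", "user-3", "treatment");

console.log(tracker.countByVariant("checkout_completed"));
```

Recording the variant alongside each event is a design choice in this sketch. It keeps the example self-contained, but the platform may attribute events to variants for you.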

Measuring impact

To assess whether your experiment is successful, you need to attach metrics to it.

FeaturesFlow distinguishes two different types of metrics:

- Success metrics - the metrics that define whether your experiment is successful or not

- Guardrail metrics - secondary metrics that you don't expect to change significantly, but that you monitor to make sure there is no negative impact

You can attach metrics to your experiment in the Configuration tab.

Note

Running experiments with a feature flag doesn't automatically track events. Remember to trigger them manually or set up a GA4 integration.